I got my labs done on Wednesday (2/18) and saw my endo yesterday (2/19). My A1C was 6.7, much higher than the ~6.1 I’ve been seeing. My Dexcom Clarity report indicated an estimated 5.7 A1C with an average BG of 116 for the past 90 days. In range (70-150) 86% of the time and high 11.4%. Only very rarely do my highs go over 200. I’m wondering if this is an anomaly (coming off of holiday season) or if there’s something I can do to improve these numbers. Before I got the Dexcom, I set my meter (FreeStyle on my Omnipod PDM) for #18 strips so my meter would read a bit higher and I could adjust accordingly. I’m wondering if I should return to that strategy.

One of the often repeated sayings on this forum is to just do what works. So if something works, why not?

But you can also adjust your target blood sugar, your IC number, your correction number on your pod too, instead of changing the number for BG values. You can more aggressively bolus and correct on the real BG numbers you are getting.

I think the whole thing is akin to people who set their alarm clock forward a few minutes so they are not late in the morning. I’ve done that, but then when I remember the alarm clock is fast, I start to ignore it…

Also, on the A1C thing. Those aren’t perfect tests. They are not exactly the average of 3 months, they are slightly weighted in that more recent weeks will affect it more than what happened months ago. A few days of high BG right before an A1C can screw it up a bit.

Possibly holidays messed you up a bit. But if you think changing your test strip code will help you, then I think you should do it!

I always cringe a bit when the A1C test is said to “measure average glucose”. An A1C value is only correlated with an average glucose level, and is not an actual measurement.

Here’s a fun study which goes into great detail on how they do that, and which people they need to exclude from the study in order to make the formulas work, e.g. anyone with variable blood glucose, children, pregnant women. They note that it isn’t always accurate for individuals, and that they need to look further at different races, because the formulas were fine-tuned and validated using mostly Caucasians. They also note that African Americans may have different actual mean glucose values.
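For anyone curious what such a formula looks like, here is a minimal sketch. It assumes the widely cited ADAG regression (estimated average glucose in mg/dL = 28.7 × A1C − 46.7); I can’t confirm that this is the formula from the study above or the one Clarity uses, and the function names are just my own. It does happen to turn the original poster’s 116 mg/dL average into the 5.7 estimate they reported.

```python
# Sketch only: assumes the ADAG regression, eAG (mg/dL) = 28.7 * A1C - 46.7.
# This is a population-level fit, not a measurement for any one person.

def estimated_average_glucose(a1c_percent: float) -> float:
    """Estimated average glucose (mg/dL) for a given A1C (%)."""
    return 28.7 * a1c_percent - 46.7


def estimated_a1c(avg_glucose_mgdl: float) -> float:
    """Invert the same regression: average glucose (mg/dL) to estimated A1C (%)."""
    return (avg_glucose_mgdl + 46.7) / 28.7


if __name__ == "__main__":
    # Numbers taken from the original post: CGM average 116, lab A1C 6.7.
    print(round(estimated_a1c(116), 1))
    print(round(estimated_average_glucose(6.7)))
```

Under that assumption, a lab A1C of 6.7 corresponds to an average of roughly 146 mg/dL, about 30 mg/dL above the CGM average. That gap is the kind of individual variation the study is talking about.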

Just sharing some fun facts. In your case, it sounds like you had found a way to trick your meter into more closely matching your A1C, so that may be worth going back to.

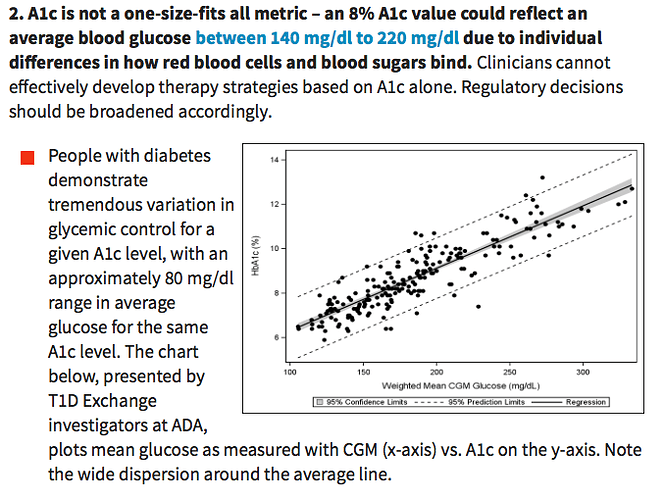

I urge you to read this diaTribe report entitled, Going Beyond A1c. We tend to think that the A1c with its X.X% resolution is more precise than it actually is. Did you know that people who measure 6.0% for their A1c can range in average blood glucose from 100-152 mg/dL? It is not a precise indication of our average blood sugar control.

Check out this from the diaTribe report:

My A1c number is usually 0.5% to 1.0% higher than what my CGM indicates for an estimated A1c. The A1c is a good measurement for how a population experiences complications, for instance. Correlating your individual A1c to that population’s experience does not hold precise merit.

I think people with diabetes would be much better off focusing on time-in-range (CGM data) than A1c. It gives a much clearer picture of your glycemia exposure.

Don’t let your last A1c number be the judge of your efforts. It could easily be a lab anomaly. Just keep your focus on the things that you know influence your blood sugar: food, exercise, and getting enough sleep. Read the report; it’s well worth the effort. Good luck!

Great stuff Terry. Thanks for posting.

As I’ve mentioned before though… it’s not clear to me which is the source of vascular, renal, neural and other complications… transient glucose floating through your veins or an excessive percentage of hemoglobin molecules that have become glycosylated (which is what an A1C test actually measures directly).

I don’t know the answer. At a glance it seems to me that the vast majority of studies of complication rates have been based on A1C and not true “average glucose” and still show an absolute correlation between A1C and complication rates. So while it’s valid that A1C is far from perfect when it comes to measuring average glucose levels, the question remains, to me: is it likewise an imperfect measure of complication risk, or not?

That would be a great study, whether well calibrated Dexcom readings outperform A1c values at predicting health outcomes. Would read.

A1C is not a perfect test. Different people can end up with different values based on how the glucose binds to the RBC. But for me, the CGM values miss so much. I can spike and be back down before my CGM ever has a clue anything happened. I don’t think my RBCs miss that sort of thing.

One thing I don’t like is when time-in-range is defined as 70-180 mg/dl like the example they gave in the article. I don’t consider 180 to be in range at all. But that’s just my opinion.

Glycosylated hemoglobin is simply a marker for glucose exposure, not the cause itself of high blood glucose complications.

The A1c number is valid as a correlate of complications only at the population level. The studies can conclude that a group of people with an A1c of, say, 8.0% have a certain risk of developing complications. What cannot be concluded is that one member of that A1c cohort has the same risk level as the group.

Do you think that a person with an average blood glucose of 100 mg/dL has the same complication risk as someone with an average BG of 152 mg/dL? They both have an A1c of 6.0%. The scientific truth of the group does not translate well to each person that comprises the group.

I’ve seen my doctors also use this loose range. I suppose it’s clinically appropriate for some groups, like young children, but I don’t consider it a good target range for me. I use 65-140 mg/dL. I see the 70-180 mg/dL target range as an unambitious goal for me.
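To show how much the chosen range changes the time-in-range number, here is a small sketch. The readings are made up purely for illustration and the function name is my own; real CGM exports would have far more points.

```python
# Sketch only: percent of readings that fall inside a target range.
# The readings below are invented for illustration, not real CGM data.

def time_in_range(readings, low, high):
    """Return the percentage of readings with low <= value <= high."""
    if not readings:
        raise ValueError("need at least one reading")
    in_range = sum(1 for r in readings if low <= r <= high)
    return 100.0 * in_range / len(readings)


# Hypothetical glucose readings in mg/dL.
readings = [62, 95, 110, 138, 145, 160, 175, 182, 120, 101]

if __name__ == "__main__":
    print(time_in_range(readings, 70, 180))  # the looser clinical range
    print(time_in_range(readings, 65, 140))  # the tighter range I use
```

With these invented numbers the same day scores 80% against 70-180 mg/dL but only 50% against 65-140 mg/dL, so a time-in-range figure means little unless the range is stated alongside it.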

How do we know this to be the case?

[quote=“Terry4, post:9, topic:58691”]

Do you think that a person with an average blood glucose of 100 mg/dL has the same complication risk as someone with an average BG of 152 mg/dL? They both have an A1c of 6.0%

[/quote]

This is what I am saying I do not know, and to my knowledge it has not actually been determined by science. If there are real studies addressing that very question I would be interested in them. I suspect the more widespread use of CGM data will allow a much better view into that subject, but it will take several decades to bring those answers into clear focus…

Just think about it. High blood glucose does direct damage to all the cells of the body, including the eyes, kidneys, nerves, digestive organs, and peripheral tissues, especially the feet. I can’t cite you chapter and verse for this assertion. It’s been my understanding for many years that the sugar attached to hemoglobin is a good proxy for overall blood glucose exposure, since science knows that red blood cells have a known lifespan. Since hyperglycemia takes a toll on all tissues, some more than others, I suppose it has some consequence on red blood cells, too.

It is an abundance of just thinking about it that has left me unsure what actually damages tissues: the temporary, transient, unbonded glucose molecules, or the glycosylated hemoglobin molecules that expose the same tissues to glucose for much longer periods… or some combination of the two.

A glycosylated hemoglobin molecule is still a glucose molecule… it just happens to be a glucose molecule bonded to a hemoglobin molecule, which gives it much more longevity. Are we supposed to assume that the same glucose molecule that’s so toxic when free floating for just a few hours becomes instantly harmless when it molecularly bonds with a hemoglobin molecule and floats around for months on end?

I just had my A1c done and it was 6.6. Dexcom Clarity estimated 6.1. I always seem to have a spread on mine.

I have to agree with @Sam19 on this one. I agree that what you’ve just stated, @Terry4, is a really reasonable hypothesis and super likely to be right; but the point is that we don’t have data showing that a person with an A1C of 6.0 and an avg BG of 110 has a lower complication rate than someone with the same A1C and a BG of 130, even if we strongly suspect it may be so.

And indeed studies that have looked at glucose variability have not found much correlation either. I am sure in the next 5 to 10 years that data will be out there, as CGM becomes more common, to definitively put this question to rest. And it just stands to reason that having normal blood sugars is better than not. I guess I’m just not convinced we can toss out the A1C yet as an independent marker of complication rate.

Not to mention that A1C is also factoring in other things that could be separately and independently correlated with complication rate. For instance, RBCs that replenish themselves more quickly might lead to a lower A1C because the body is more quickly replacing the red blood cells…which in turn might mean the body overall has more robust repair mechanisms.

Somewhat related to this discussion, but perhaps a bit of a tangent. My CGM numbers are frequently off, but I bring this up as something to ponder and ask the general population here.

I frequently hear of a CGM estimate being somewhat lower than what a person’s actual A1C test came out to be. The OP started off with that. And I saw KCsHubby_Dave say something similar.

I have seen this MANY times on the forum. Many people have said something like “Clarity predicted 5.8 but my test was 6.4…”

So the question is - has anyone ever had a higher Clarity prediction than their actual A1C test?! Just wondering if the Clarity algorithm is just wrong.

Opinions?

Thus far my actual A1c’s are always very close to my Dexcom Clarity estimation. Similarly when I printed out my Diasend compilation report, my average meter BG and my average Dexcom BG were identical. My meter and Dex results are usually close, but it was quite amazing to have them the exact same number. My A1c’s also usually correlate well with my meter average.

My guess is that my glycation rate must be right in the middle of the averages that determine the A1c formula.

One theory I had was that the Dexcom underestimates lows and highs. So in the high range it often uses some kind of interpolation, and if it reads 300, maybe it’s actually 376. And similarly when we see a 40 on Dexcom it’s very rarely actually a 40 on a finger stick, even though in the middle ranges of 80 to about 200, Dex is pretty much in line with the finger prick.

But that doesn’t really explain how people who spend a ton of time in range and rarely see those 300s or those 40s are getting off numbers.

We’ve experienced Dexcom showing spot-on A1Cs when my son’s average BG was higher. Now that we’ve lowered it, his Dexcom seems to underestimate A1C. I’m wondering if it’s because his red blood cells aren’t dying as quickly anymore because they’re not getting damaged as quickly – meaning they have time to acquire the same level of glucose on the outside as they did before. That’s pure speculation, though.

Because it’s just a crude estimate. It’s not like there’s a proven formula that X number of HGBs glycosylate at Y rate at Z blood glucose from which everything can be interpolated to an exact result… the problem isn’t really with the estimate; it’s only when we think that it’s an exact science that it becomes a disappointment.